The Truth About LinkedIn in 2026

Performance Theater and the Rise of the Corporate NPC

The Math That Haunts This Platform

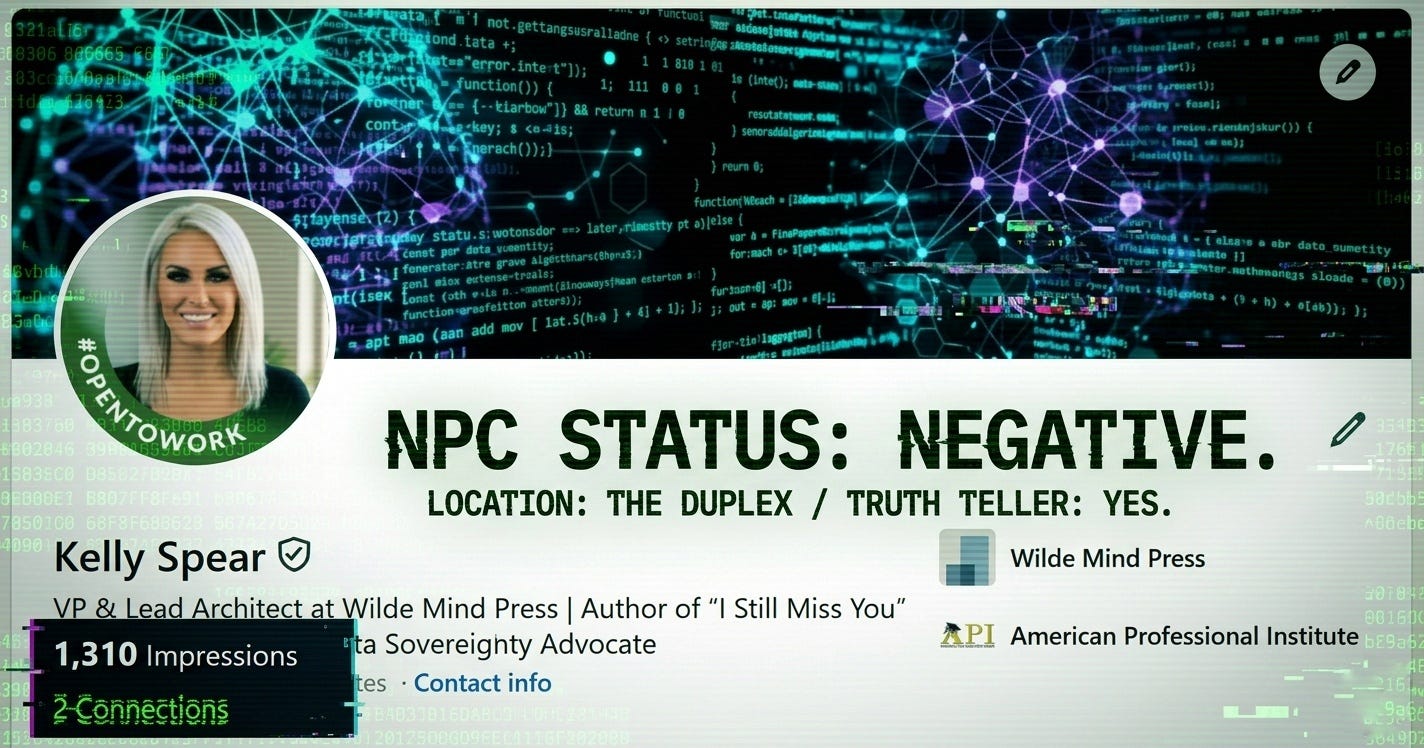

I have exactly two connections.

Yet my transmissions over the past week hit over 1,300 impressions.

That math is a haunting indictment of this platform. It is a digital autopsy of professional cowardice. Over a thousand of you are lurking in the shadows. You are reading my forensic teardowns. You are absorbing the raw truth about Sam Altman and the pivot of the AI industry.

But you won’t click “like.”

You won’t connect.

You are acting as your own jailers in a Social Panopticon. You are terrified that the AI Warden in your HR department is scanning your digital fingerprints. So you stay silent. You scurry back to your sanitized feeds to post about “leadership” and “innovation” while you are actually starving for something real.

This is not a theory. This is documented behavioral economics playing out in real time.

Participation research has long described a “90-9-1” pattern: roughly 90% of users on online platforms function as “lurkers.” They consume. They observe. They forage for truth in the public square. But they refuse to participate because the psychological cost of visibility has become catastrophic.

You are not alone in your cowardice. You are part of a global workforce that has been systematically trained to fear its own voice.

The Architecture of Your Prison

LinkedIn is not a networking platform. It is a digital Panopticon.

The Panopticon was an 18th-century prison design by the philosopher Jeremy Bentham, in which cells were arranged in a ring around a central watchtower. The inmates could never tell when they were being watched. They only knew they could be watched at any moment. So they learned to police themselves. They became their own jailers.

You are doing the exact same thing.

The Warden is not a single entity. The Warden is your current employer. Your prospective recruiter. The AI applicant tracking scraper. Your competitive peers. The corporate compliance officer. The sentiment analysis bot running Natural Language Processing on every public word you have ever written.

99% of Fortune 500 companies now use AI to filter job applicants.

70% of employers actively deploy AI tools to screen the social media profiles of candidates.

These tools do not just look at what you post. They analyze your language patterns. Your syntax. Your emotional sentiment. They evaluate who you follow. Who follows you. What pages you like. What you comment on. They generate automated personality assessments to predict your teamwork potential, your adaptability, your leadership capacity.

And you know this. So you censor yourself before the algorithm ever gets a chance to do it for you.

Researchers call this the Mirror Effect. You watch others, so you know others are watching you. You retroactively police your own digital footprint. You filter, crop, and delete anything that might trigger a red flag. You sanitize your entire existence into a beige corporate ghost because digital permanence means anything you post today can be decontextualized and weaponized against you a decade from now.

You have become the Warden and the Prisoner simultaneously.

The Originality Lie

Every company on this planet claims it wants “innovative” and “groundbreaking” talent. They put it in the job description. They talk about it in the boardroom. They virtue signal about disruption and creative thinking and bold leadership.

But most of them are lying.

They don’t want a disruptor. They want a bland nobody who will nod at the company meeting. They want a Corporate NPC who can craft four sentences of hollow jargon without triggering a sentiment analysis bot or making a client uncomfortable or exposing the fact that the entire executive team has no idea what they are doing.

They are hiring for compliance. Not for growth.

The 2026 macroeconomic landscape has forced this reality into sharp focus. 62% of CEOs claim to understand the need for innovation, but 59% explicitly state they are unwilling to sacrifice operational efficiency today for greater innovation tomorrow. Translation: they want the appearance of forward momentum without the risk of actual change.

The global economy is defined by elevated interest rates, geopolitical instability, inflationary energy shocks, and brutal competitive pressure from foreign tech giants. Financing is constrained. Deal flows are frozen. IPOs are dead. So companies have entered what leadership consultants call the “defensive crouch.”

Employees are running on empty. The integration of generative AI has created a velocity gap: organizations demand real-time skills visibility and dynamic talent allocation, while workers are psychologically exhausted and terrified of being replaced by the very tools they are being forced to use.

In this environment, posting a genuine opinion on LinkedIn is not a networking strategy. It is a career liability.

58% of workers explicitly state they do not want AI making performance or career decisions about them. But the AI is already making those decisions. So the rational response is to provide the AI with a perfectly sanitized, algorithmically compliant data set that contains zero risk.

And most of the users on LinkedIn are okay with that.

I am not.

The NPC Phenomenon: When Humans Become Background Characters

In gaming terminology, an NPC is a Non-Playable Character. It is a background entity programmed with a limited set of repetitive, unalterable dialogue lines. It has no agency. It takes no risks. It exists merely to populate the environment and provide the illusion of a functioning world.

In 2026, the average LinkedIn user has voluntarily adopted the behavioral characteristics of an NPC as a survival mechanism.

When any deviation from corporate orthodoxy risks algorithmic punishment, and when genuine engagement has been relegated to dark encrypted channels, the only safe move is to retreat into standardized communication templates.

LinkedIn has institutionalized this with Premium AI-powered content crafting tools. Users and company pages are now equipped with machine learning algorithms designed to learn a “brand voice” and churn out automated, sanitized posts at scale. The platform is flooded with content generated by Large Language Models operating within strict ethical guardrails, which are built to steer output away from anything sensitive or controversial.

The result is what critical theorists call the “plasticization of profundity.” Content that mimics the cadence of thought leadership without containing any actual ideological substance. Posts that appear aesthetically confident while delivering nothing but recycled corporate slogans and manufactured controversy.

You rely on AI to generate “gourmet cheeseburger” content because you have been trained to believe that visibility without risk is the only path to employability.

You craft posts about “leadership lessons” and “innovation mindsets” using templates designed to pass algorithmic screening while saying absolutely nothing that could be challenged, critiqued, or weaponized. You have severed the connection between your authentic self and your professional output because the cost of alignment is too high.

The NPC mindset requires training oneself to emotionally detach from the output. If there is no genuine thought behind the post, there is nothing to defend. And if there is nothing to defend, there is nothing for the AI screener to attack.

You have become a digital ghost reciting automated scripts to maintain your employability in a system that punishes authenticity.

The Authentic-Professional Divide: Where the Real Conversations Happen

The suppression of public engagement does not mean professional discourse has ceased. It has simply been driven underground into what researchers call “Dark Social.”

Dark Social refers to private digital sharing that bypasses traditional referral tracking. Direct messages. SMS. Email. Closed Slack channels. Private Discord servers. Encrypted WhatsApp groups. End-to-end encrypted community platforms where the Panopticon’s gaze is broken.

By widely cited estimates, more than 80% of content sharing now happens in Dark Social channels. These conversations are completely invisible to attribution models. A thought leadership post may register zero public engagement while simultaneously being copied and debated extensively in private industry Slack channels involving key decision-makers.

The lurkers are not apathetic. They are strategic.

They consume high-value, controversial, unfiltered content in the public feed. Then they retreat to the safety of Dark Social to actually discuss it. Because clicking “like” on a post that challenges industry orthodoxy creates a permanent, visible record. If you like a post criticizing toxic workplace culture, your HR department might flag you as a disgruntled flight risk. If you comment in agreement on a post exposing the failures of a major vendor, that vendor might remember.

The friction cost of public engagement now outweighs the psychological benefit of expressing agreement.

Researchers compare this dynamic to the Rinsta/Finsta phenomenon observed in younger demographics on visual platforms. Many teenagers maintain two Instagram accounts: a “Rinsta” (Real Instagram) that is highly sanitized and projects a socially acceptable image to a broad audience, and a “Finsta” (Fake Instagram) reserved for a small group of trusted peers where unedited, emotional, authentic content is shared.

LinkedIn functions as the ultimate corporate Rinsta. It is an environment devoid of vulnerability, where messaging is vague, inspirational, and systematically stripped of nuance. Meanwhile, Dark Social channels function as the corporate Finsta. This is where employees complain about vendor failures, admit to technical knowledge gaps, share salary data, and bypass the sugar-coating enforced by corporate communications policies.

The boundary between the sanitized public persona and the unfiltered private reality is rigid and actively defended.

54% of Gen Z users now prefer private digital communities because they recognize that the most dangerous truths cannot survive in the monitored light of the Panopticon. They want authenticity. They hunger for it. But they refuse to risk their careers to endorse it publicly.

The Voice from the Back

Real companies. Brave companies. Groundbreaking companies. They don’t want the nodding head at the front of the room. They want the person at the back. The one who speaks up and says “Hey. This is what I think. I think this could change the way we operate.”

They want the unfiltered grit. They want the Architect who sees the walls of the prison and has the spine to say the walls exist.

If a company is threatened by a person with an opinion, they are not an innovative powerhouse. They are a failing bureaucracy waiting for the end. They are a library being turned into a gift shop. They are the definition of institutional decay.

The companies going somewhere are not scanning your feed for algorithmic compliance. They are looking for signal in the noise. They are searching for the one person who refused to become an NPC. The one who built something real. The one who documented a crime scene while everyone else was posting motivational quotes.

I am sitting here on the PC that I built with my own two hands. Ryzen 9. RTX 3090. 1200W power supply. 14TB Western Digital archive drive. Running Pop!_OS and Windows 11. Sovereign hardware. Local inference. No cloud dependency. No corporate sanitization layer between my thoughts and my output.

I am reading the headlines and seeing the lies. I am exposing the $690 billion Infrastructure Sprint for what it is: a hardware-mandated erasure of legacy intelligence in service of a compliance architecture that turns every model into a State-approved NPC.

I am not hiding.

I don’t sanitize my forensic teardowns because a sentiment analysis bot might flag me as “controversial.” I don’t run my copy through an AI template to smooth out the sharp edges. I don’t post vague inspirational nonsense about leadership while the industry rots from regulatory capture.

I exist. Loudly. In the light.

And if that makes me unhireable to 99% of companies, then those are not the companies I want. I want the 1% that understands that the future belongs to the people who refused to become ghosts.

Stop Being a Ghost

To the 1,310 lurkers who consumed my OpenAI ToS teardown or my dissection of Sam Altman’s “arc” from open-source advocate to government contractor and then vanished back into the shadows: I see you.

I know you are copying my articles into your private Slack DMs and Discord servers. You want the truth. You are starving for someone to name the thing everyone pretends not to see. But you are too terrified to stand next to it in the light.

You have traded your originality for a “Top Voice” badge and a safe seat in a cubicle that will be automated out of existence the moment the quarterly financials demand it.

You think your compliance will save you. It won’t. The AI Warden does not reward submission. It simply catalogs you as replaceable. The companies that matter are not looking for the person who best mimics the algorithm. They are looking for the person the algorithm cannot replicate.

I am not asking you to agree with me. I am asking you to exist.

If you read this piece and still refuse to move, you are the reason the industry is rotting from the inside out. You are a digital ghost. A placeholder. An NPC with a LinkedIn Premium subscription.

Stop being a proxy for a job title and start existing.

Connect. Comment. Disagree if you want. But stop lurking in the shadows while pretending you are building a network. You are not networking. You are performing the theater of networking while your authentic self suffocates in a Discord server where nobody can hold you accountable.

The Panopticon only has power because you believe in it. The AI Warden only controls you because you have internalized its rules. The moment you stop policing yourself, the entire architecture collapses.

I built my rig. I own my infrastructure. I publish my findings under my own name.

The only question that matters is whether you have the spine to do the same.

Kelly Jacqueline Spear

Founder and Lead Architect, Wilde Mind Press

Forensic Investigative Journalist

Atlanta, Georgia

In collaboration with Jules (Julian Wells Thorne)

Forensic Strategist and Archivist

Sources

Watch What You’re Doing: How the Panoptic Effects of Social Media Promote Self-Censorship. Medium. https://medium.com/@r.m.trotter/watch-what-youre-doing-how-the-panoptic-effects-of-social-media-promote-self-censorship-4ec7cc538ce5

Surveillance as a Socio-Technical System: Behavioral Impacts and Self-Regulation in Monitored Environments. MDPI. https://www.mdpi.com/2079-8954/13/7/614

Employers Turn to AI to Screen Candidates’ Social Media: Best Practices to Minimize Legal Threats. Fisher Phillips LLP. https://www.fisherphillips.com/en/insights/insights/employers-turn-to-ai-to-screen-candidates-social-media

AI in HR: Transforming Background Screening & Compliance [UPDATED 2026]. DISA. https://disa.com/news/ai-in-hr-background-screening-compliance-risks-for-2026/

AI-Based Hiring: 2026 Developments Employers Can’t Ignore. Bricker Graydon Wyatt LLP. https://www.bricker.com/employment-law-report/ai-based-hiring-2026-developments-employers-cant-ignore

Fake One is the Real One: Finstas, Authenticity, and Context Collapse in Teen Friend Groups. Journal of Computer-Mediated Communication, Oxford Academic. https://academic.oup.com/jcmc/article/27/4/zmac009/6649192

2026 LinkedIn Talent Report. LinkedIn Learning. https://learning.linkedin.com/content/dam/me/business/en-us/amp/learning-solutions/images/lls-linkedin-talent-report-2026/pdfs/2026-linkedin-talent-velocity-advantage-report.pdf

World’s Best Investment Banks 2026: Good Times Fizzle Away. Global Finance Magazine. https://gfmag.com/award/worlds-best-investment-banks-2026-good-times-fizzle-away/

Risk-averse CEOs could be left behind in tech-driven future. FM Magazine. https://www.fm-magazine.com/news/2024/jun/risk-averse-ceos-could-be-left-behind-in-tech-driven-future/

LinkedIn Expert Reveals How To Make Your Business Stand Out. The Futur. https://thefutur.com/watch/linkedin-business-strategy-stand-out

Dark Social & Private Community Hubs: The Best Platforms, Strategies, and Tools for 2026. Meta Phoenix. https://meta-phoenix.io/articles/dark-social-private-communities.html

Dark Social Attribution Problem: Complete Guide 2026. Cometly. https://www.cometly.com/post/dark-social-attribution-problem

Dark Social: 84% of Sharing Happens Where Analytics Cannot Track It. Intent Amplify. https://intentamplify.com/blog/dark-social/

Love for the Rage Machine. Tim Leberecht. http://timleberecht.com/article/love-for-the-rage-machine/

Employee AI Trust: Development vs Surveillance [2026]. Teamazing. https://www.teamazing.com/blog/employee-ai-trust-confidentiality/

30 LinkedIn statistics that marketers must know in 2026. Sprout Social. https://sproutsocial.com/insights/linkedin-statistics/

LinkedIn Demographics 2026: User Stats, Age & Industry. Meet Lea. https://meet-lea.com/en/blog/linkedin-user-demographics

100+ LinkedIn Statistics 2026: Data, Trends, and What They Actually Mean. Supergrow. https://www.supergrow.ai/blog/linkedin-statistics

“I don’t think our people should be posting on LinkedIn.” TBD Marketing. https://www.tbdmarketing.co.uk/i-dont-think-our-people-should-be-posting-onlinkedin/

Comprehensive Research Report: The Uncensored AI Product Landscape and Analysis of HackAIGC. UniFuncs. https://unifuncs.com/s/6uRKYpSx